How To Download An Array From Chrome Dev Tools UPDATED

by Praveen Dubey

How to use the browser console to scrape and save data in a file with JavaScript

A while back I had to crawl a site for links, and then use those page links to crawl data using selenium or puppeteer. The setup for the content on the site was a bit odd, so I couldn't start directly with selenium and node. Also, unfortunately, the amount of data on the site was huge. I had to quickly come up with an approach to first crawl all the links and then pass those on for a detailed crawl of each page.

That's where I learned this cool stuff with the browser Console API. You can use this on any website without much setup, as it's just JavaScript.

Let's jump into the technical details.

High Level Overview

To crawl all the links on a page, I wrote a small piece of JS in the console. This JavaScript crawls all the links (it takes one to two hours, as it handles pagination too) and dumps a JSON file with all the crawled data. The thing to keep in mind is that the website needs to work like a single-page application, so that it does not reload the page when you crawl more than one page. If the page does reload, your console code will be gone.
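As a rough illustration of the link-collection step, here is a minimal sketch. The function name `collectLinks` and the default selector are assumptions made for this example, not the original crawler; it only gathers the unique link URLs under a given root and leaves pagination out.

```javascript
// Hypothetical helper: collect the unique link URLs found under `root`.
// `root` is anything with querySelectorAll, e.g. `document` in the console.
function collectLinks(root, selector = 'a[href]') {
  const seen = new Set();
  for (const anchor of root.querySelectorAll(selector)) {
    // Skip anchors without a usable URL; the Set removes duplicates.
    if (anchor.href) {
      seen.add(anchor.href);
    }
  }
  return [...seen];
}
```

In the browser console this could be called as `collectLinks(document)`, and the resulting array kept in a variable while you move through the paginated pages.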

Medium does not refresh the page in some scenarios. For now, let's crawl a story and save the scraped data in a file from the console automatically after scraping.

But before we do that, here's a quick demo of the final execution.

1. Get the console object instance from the browser

```javascript
// Console API to clear console before logging new data
console.API;
if (typeof console._commandLineAPI !== 'undefined') {
    console.API = console._commandLineAPI; // Chrome
} else if (typeof console._inspectorCommandLineAPI !== 'undefined') {
    console.API = console._inspectorCommandLineAPI; // Safari
} else if (typeof console.clear !== 'undefined') {
    console.API = console;
}
```

The code is simply trying to get the console object instance based on the user's current browser. You can skip the checks and directly assign the instance for your browser.

For example, if you are using Chrome, the code below should be sufficient.

```javascript
if (typeof console._commandLineAPI !== 'undefined') {
    console.API = console._commandLineAPI; // Chrome
}
```

2. Defining the Junior helper function

I'll assume that you have a Medium story open in your browser right now. Lines 6 to 12 define the DOM element attributes which can be used to extract the story title, clap count, user name, profile image URL, profile description and read time of the story, respectively.

These are the basic things which I want to show for this story. You can add a few more elements, like extracting links from the story, all images, or embed links.
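To make the idea of such a helper concrete, here is a minimal sketch. The field names and every CSS selector in `STORY_FIELDS` are hypothetical placeholders, since Medium's real markup is not shown here and changes over time; `extractFields` is also an assumed name, not the original helper.

```javascript
// Hypothetical field map: each entry names a CSS selector and, optionally,
// an attribute to read instead of the element's text.
const STORY_FIELDS = {
  title: { selector: 'h1' },
  userName: { selector: '.author-name' },                 // assumed selector
  profileImage: { selector: 'img.avatar', attr: 'src' },  // assumed selector
  readTime: { selector: '.read-time' },                   // assumed selector
};

// Read every configured field from `root` (e.g. `document`).
// Fields whose element is missing come back as null.
function extractFields(root, fields) {
  const record = {};
  for (const [name, { selector, attr }] of Object.entries(fields)) {
    const el = root.querySelector(selector);
    if (!el) {
      record[name] = null;
    } else if (attr) {
      record[name] = el.getAttribute(attr);
    } else {
      record[name] = el.textContent.trim();
    }
  }
  return record;
}
```

In the console, `extractFields(document, STORY_FIELDS)` would return one plain object per story. Keeping the selectors in a single map means that adding links, images, or embeds is a matter of adding entries.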

3. Defining our Senior helper function: the beast

As we are crawling the page for different elements, we will save them in a collection. This collection will be passed to one of the main functions.

We have defined a function named console.save. The task of this function is to dump a CSV / JSON file with the data passed to it.

It creates a Blob object with our data. A Blob object represents a file-like object of immutable, raw data. Blobs represent data that isn't necessarily in a JavaScript-native format.

The created blob is attached to a link tag <a>, on which a click event is triggered.
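The Blob, link and click steps described above can be sketched as follows. This is a minimal version written for illustration, not the original console.save; the names `serializeForDownload` and `saveToFile`, the default file name, and the MIME type are assumptions.

```javascript
// Turn the data into text: strings pass through, everything else becomes
// pretty-printed JSON.
function serializeForDownload(data) {
  return typeof data === 'string' ? data : JSON.stringify(data, null, 2);
}

// Browser-only: wrap the text in a Blob, point a temporary <a> at it,
// and click the link so the browser downloads the file.
function saveToFile(data, filename = 'console.json') {
  const blob = new Blob([serializeForDownload(data)], { type: 'application/json' });
  const url = URL.createObjectURL(blob);

  const link = document.createElement('a');
  link.href = url;
  link.download = filename; // the download attribute sets the saved file name
  document.body.appendChild(link);
  link.click();
  link.remove();

  // Release the object URL once the click has been handled.
  setTimeout(() => URL.revokeObjectURL(url), 0);
}

// Optional, in the console: expose it under the name used in this article.
// console.save = saveToFile;
```

For example, `saveToFile([1, 2, 3], 'numbers.json')` run in the console should prompt a download of a small JSON file.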

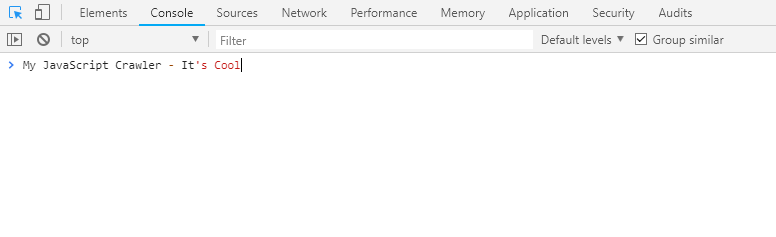

Here is the quick demo of console.save with a small array passed as data.

Putting together all the pieces of the code, this is what we have:

- Console API Instance

- Helper function to extract elements

- Console Save function to create a file

Let's execute our console.save() in the browser to save the data in a file. For this, you can go to a story on Medium and execute this code in the browser console.

I have shown the demo with extracting data from a single page, but the same code can be tweaked to crawl multiple stories from a publisher's home page. Take freeCodeCamp as an example: you can navigate from one story to another and come back (using the browser's back button) to the publisher home page without the page being refreshed.

Below is the bare minimum code you need to extract multiple stories from a publisher's home page.
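A loop of this kind might look like the sketch below. It is an illustration under assumptions rather than the original code: `crawlStories`, its option names, and the fixed delay are all hypothetical, and the callbacks that open a story and read its data are left to the caller.

```javascript
// Small helper to pause between navigation steps.
const sleep = (ms) => new Promise((resolve) => setTimeout(resolve, ms));

// Visit `count` stories one after another without reloading the page:
// open a story, wait for it to render, extract data, go back, wait again.
async function crawlStories({ count, openStory, extract, goBack, delayMs = 2000 }) {
  const rows = [];
  for (let i = 0; i < count; i++) {
    openStory(i);          // e.g. click the i-th story link on the home page
    await sleep(delayMs);  // assumed fixed wait; tune it for the site
    rows.push(extract());  // read whatever fields you need from the story
    goBack();              // e.g. history.back()
    await sleep(delayMs);
  }
  return rows;
}

// Possible console usage (the selectors here are placeholders):
// crawlStories({
//   count: 5,
//   openStory: (i) => document.querySelectorAll('a.story-link')[i].click(),
//   extract: () => ({ title: document.querySelector('h1').textContent.trim() }),
//   goBack: () => history.back(),
// }).then((rows) => console.log(rows));
```

A fixed delay is the simplest way to wait for a single-page application to finish rendering; polling for a specific element would be more robust but takes more code.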

Let's see the code in action for getting the profile description from multiple stories.

For any application of this type, once you have scraped the data, you can pass it to our console.save function and store it in a file.

The console.save function can be quickly attached to your console code and can help you dump the data into a file. I am not saying you have to use the console for scraping data, but sometimes this will be a much quicker approach, since we are all very familiar with working on the DOM using CSS selectors.

You can download the code from GitHub.

Thank you for reading this article! I hope it gave you a cool idea for scraping some data quickly without much setup. Hit the clap button if you enjoyed it! If you have any questions, send me an email (praveend806 [at] gmail [dot] com).

Resources to learn more about the Console:

- Using the Console | Tools for Web Developers | Google Developers: Learn how to navigate the Chrome DevTools JavaScript Console. (developers.google.com)

- Browser Console: The Browser Console is like the Web Console, but applied to the whole browser rather than a single content tab. (developer.mozilla.org)

- Blob: A Blob object represents a file-like object of immutable, raw data. Blobs represent data that isn't necessarily in a… (developer.mozilla.org)

Posted by: farleysirove.blogspot.com